Idan Schwartz

Assistant Professor

Bar-Ilan University

Research & Bio

I'm an Assistant Professor at Bar-Ilan University, leading the Multimodal Lab.

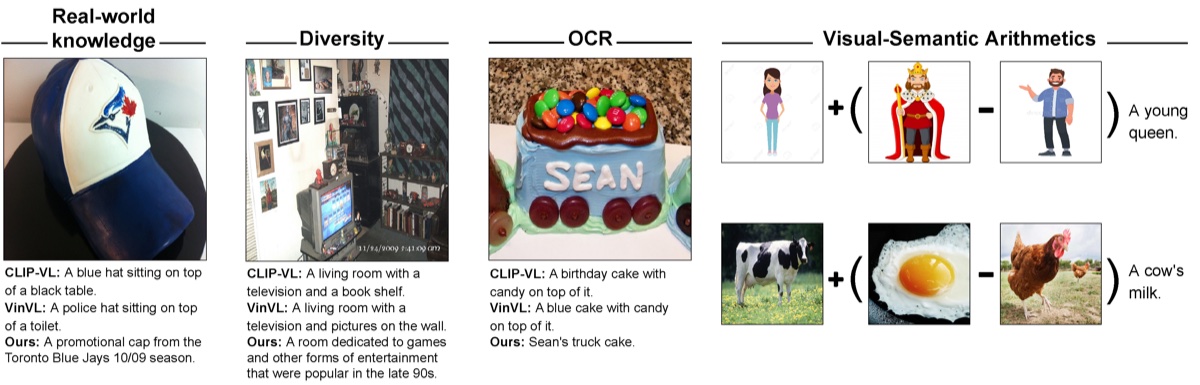

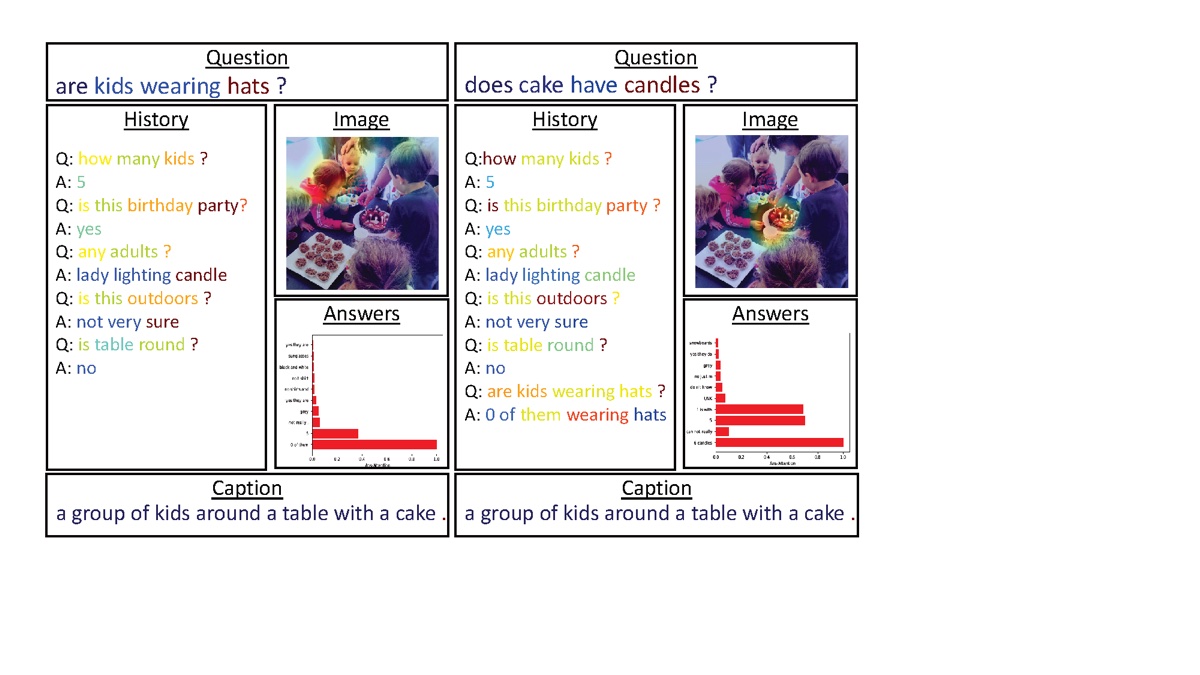

My research focuses on multimodal problems, primarily generative ones, addressing challenges such as modality alignment and efficient inference-time solutions.

I also have a strong interest in connecting ideas from cognitive science and decision making to deep learning concepts, such as model perceptiveness, representation as comprehension, attention, and programming as System 2 problem solving.

I completed my postdoc with Prof. Lior Wolf at Tel Aviv University. Before that, I earned my PhD in Computer Science from the Technion, under the supervision of Prof. Tamir Hazan and Prof. Alexander G. Schwing from UIUC. My thesis focused on Cognitive Models in Deep Learning. You can find my thesis here.

My industry research experience includes work on eBay's catalog (vision and language), Microsoft's Assistant (transcript-based meeting insights), and Spot's cloud workload optimization platform (time-series prediction). I currently serve as Chief Scientist at Aigency.ai, where I'm helping revolutionize the internet in the age of intelligent agents.

Interests

- Artificial Intelligence & Cognition

- Attention Models

- Multimodal Learning

- Computer Vision

- Natural Language Processing

Education

- Postdoc in Computer Science, Tel Aviv University, 2023

- PhD in Computer Science, Technion, 2022

- BSc in Computer Science, Technion, 2015